The Future of Creation

It’s been quite some time since I put pen to paper for Revolutionary Tech. Pandemics, Wars, Rocket Launches… Nothing really pulled me to the page. But today, I was aroused once again by my first love: Artificial Intelligence. Things seem to be reaching a boiling point…

The battle lines are being drawn in the upcoming war for the future of generative AI. For the uninitiated, this refers to software that can be used to generate “new” content from a relatively simple input. From a single string of text, you may be able to get a song, a story, or a work of graphic art created by an algorithmic author in less time than it takes to look up your favorite creator on Instagram. There have been some strides made in this technology over the past year that are causing quite a stir.

The business community is all abuzz about ChatGPT 3.5. And for good reason. The latest iteration of OpenAI’s natural language model has demonstrated some incredible powers. The base version responds to complex mathematical queries and can rap educational verses in the style of Eminem. With some effort, the same model can be repurposed to handle much of what humans are relied on to do today, reducing the need for labor and even reimagining entire service categories. One glimpse of this potential put even the mighty Alphabet on high alert.

But one group in particular looks set to take the first stand against the new technology: graphic artists. It’s a fight that’s worth taking note of. One that touches on some of my favorite topics: identity, intellectual property, digital rights and collective use of data. But, let’s start at the beginning.

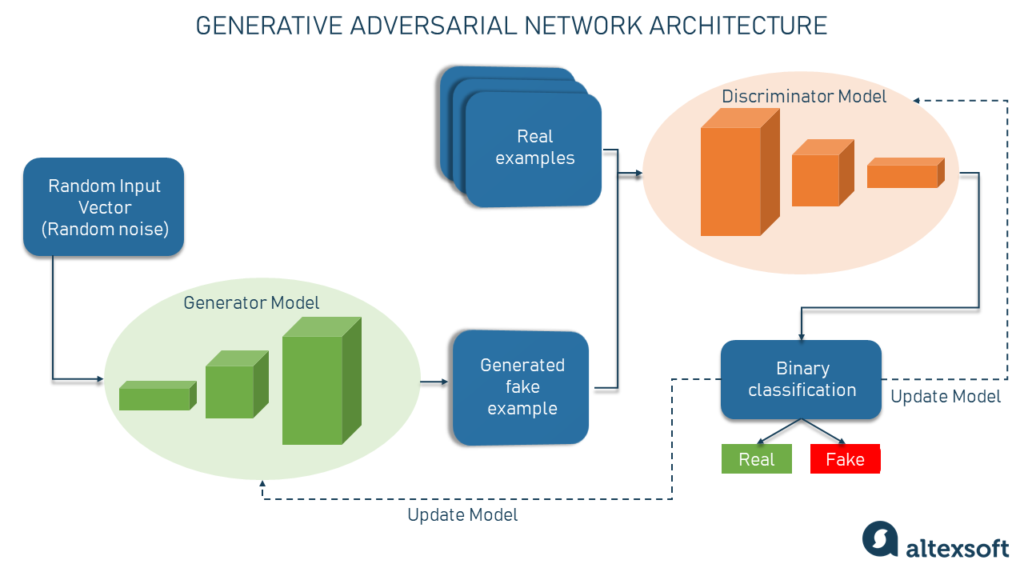

A host of graphic art generation models have been created over the past few years. DALL-E, Stable Diffusion, Midjourney… Today there are dozens of algorithmic graphic designers to choose from, both open source and commercial. While the details of construction vary, the basic process for manufacturing one of these is as follows: Pieces of art are collected from the internet or some other repository of works and are labeled by human workers or volunteers. This means that a single text description and/or many text-based tags are applied to each work in order to help a machine interpret it. Once labeled, the images are used to train a model that tries to generate new images that might match those tags or text labels in the future.

So, images are used to create the model and then the model creates new images. This model will have developed a concept of what an individual tag or description means. For example, it might know what a dog is or what a watercolor image looks like based on what it has seen before. When given a prompt that combines labels from different sets of images, it will labor to find the right balance of distinguishing features.
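To make that idea concrete, here is a deliberately tiny sketch in Python. It is not how DALL-E, Stable Diffusion, or Midjourney actually work. I'm assuming each "image" can be boiled down to three made-up numbers (`furriness`, `paint_bleed`, `line_sharpness`), that a "concept" is just the average of the images carrying a tag, and that a prompt is answered by averaging concepts. The data and the function names `train` and `generate` are invented for illustration.

```python
from collections import defaultdict

# Hypothetical training set: each "image" is reduced to three made-up
# features (furriness, paint_bleed, line_sharpness) plus human-applied tags.
LABELED_IMAGES = [
    ((0.9, 0.1, 0.8), {"dog", "photo"}),
    ((0.8, 0.9, 0.2), {"dog", "watercolor"}),
    ((0.1, 0.9, 0.1), {"landscape", "watercolor"}),
    ((0.1, 0.1, 0.9), {"landscape", "photo"}),
]

def train(examples):
    """Learn one 'concept' per tag: the mean feature vector of its images."""
    sums = defaultdict(lambda: [0.0, 0.0, 0.0])
    counts = defaultdict(int)
    for features, tags in examples:
        for tag in tags:
            counts[tag] += 1
            for i, value in enumerate(features):
                sums[tag][i] += value
    return {tag: tuple(s / counts[tag] for s in sums[tag]) for tag in sums}

def generate(concepts, prompt_tags):
    """Blend the concepts named in the prompt by averaging them (toy stand-in)."""
    vectors = [concepts[tag] for tag in prompt_tags]
    return tuple(sum(column) / len(vectors) for column in zip(*vectors))

concepts = train(LABELED_IMAGES)
blended = generate(concepts, ["dog", "watercolor"])
print([round(x, 2) for x in blended])
```

Even in this toy, notice that no single training image comes back out. The "watercolor dog" is a blend that sits between what the model saw under each tag, and every labeled image still left its fingerprint on the result.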

Astute readers may recognize the conflict inherent in this process. AI, in the best of times, accomplishes something that the owners and contributors of the data are all happy with. Take Netflix as an example. You contribute data to Netflix through your movie selections. Netflix takes that data, along with the data of their other customers, and builds a model called a recommender system. Then, the recommender can offer decent options for what to watch next when you finish a binging session. You’re happy and Netflix is happy. That isn’t always true in the case of generative AI art.
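For the curious, the Netflix-style bargain can be sketched in a few lines of Python. This is only a toy under my own assumptions, not Netflix's actual system: the viewers, the titles, and the `recommend` function are all invented, and the "model" simply counts how often other viewers with overlapping tastes watched something you haven't.

```python
from collections import Counter

# Hypothetical viewing histories; the names and titles are invented.
HISTORIES = {
    "ana": {"Space Saga", "Robot Uprising", "Baking Duel"},
    "ben": {"Space Saga", "Robot Uprising", "Moon Heist"},
    "cam": {"Baking Duel", "Garden Rescue"},
}

def recommend(user, histories, top_n=2):
    """Suggest titles watched by viewers whose history overlaps the user's."""
    seen = histories[user]
    scores = Counter()
    for other, titles in histories.items():
        if other == user:
            continue
        overlap = len(seen & titles)  # shared titles act as a similarity weight
        for title in titles - seen:
            scores[title] += overlap
    return [title for title, score in scores.most_common(top_n) if score > 0]

print(recommend("ana", HISTORIES))
```

The point of the sketch is the exchange: every viewer's data goes in, and every viewer gets suggestions back out.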

With a generative AI, the artists who supply the initial data aren’t considered. A company they’ve never heard of collects all the work it can get its hands on en masse by crawling the various websites and online galleries that contain it. The company then creates the model and begins churning out new work by attempting to mimic the artists that it has seen. The artists themselves are none the wiser… Until they see their style of artwork in your profile pic. To add insult to injury, the company may even charge users for access to the model, making a profit off of the data it consumed.

The conflicts are clear. Not only is the artist not consulted during the process, the AI company is now vying against the artist for customers worldwide. As you can imagine, this hasn’t gone over well. Twitter, Medium, YouTube, and every other corner of the internet have filled with artists railing against this brave new world. Indeed, the internet has fielded arguments from both sides. The world’s technophiles have engaged in the debate to praise the AI companies and decry the neo-Luddites’ protectionism.

In one sense, AI is already at a disadvantage. The current policy of the United States Copyright Office is to not offer copyright protection to any works that were not created by human beings. This bars any purely machine-generated content from receiving a copyright. Dr. Stephen Thaler, a computer scientist from St. Louis, Missouri, tested this restriction in 2018 when he attempted to register a copyright for a computer-generated work, “A Recent Entrance to Paradise”. His application was denied, and the Copyright Office’s Review Board affirmed the denial in 2022. Thaler fared no better on the patent front: he sued the USPTO over its refusal to name an AI as an inventor and lost in 2021. Score one for humanity.

But that was only round one of the conflict. In November of last year, programmer, designer and lawyer Matthew Butterick launched a class action lawsuit over Copilot, the code-writing tool created by GitHub, Microsoft and OpenAI. His claim? That Copilot harvests code from open source contributors and then recreates that code in commercial products without proper attribution. In doing so, Butterick argues, Copilot violates the open source licenses of the projects whose code it leverages. For their part, Copilot’s makers seem to see this as Fair Use of created content, an argument that is foundational to machine learning. If they prevail in this case, they could make that foundation much more stable. If they fail, it could create a major hurdle for future development of the technology.

The Fair Use doctrine is a principle in United States copyright law that allows for the limited use of copyrighted material without the need for permission from the copyright holder. The doctrine is intended to balance the interests of copyright holders with the public’s interest in the free flow of information and ideas. When applied to generative AI, the question is whether the usage of copyright protected material as training data counts as Fair Use. It’s a tricky question.

First off, using a work in its entirety is a red flag, and the training process does exactly that. However, when the model actually creates an output for a user, it generally won’t replicate an existing work. Second, a fair use defense is much harder to sustain in some cases, like creating a commercial derivative work. That issue is also pretty hard to parse when dealing with AI. Is the content that a model produces derivative or transformed? It also doesn’t help that the concept of transformation isn’t very well defined in law.

This leads us to the third legal battle we’ll be exploring today, this time taken to the level of the Supreme Court. It’s actually part of a much older conflict from the art world and, in fact, doesn’t involve artificial intelligence at all. Still, it’s a case that lawyers on both sides of the AI fight, those defending AI companies and those suing them, are studying with intensity. Enter: Andy Warhol.

For those who weren’t alive and consuming media in the ’70s or ’80s, Andy Warhol was one of the most commercially successful artists of that era and is viewed as a founding father of pop art. Warhol is well known for pieces that took photos of everyday objects, celebrities and news events and reimagined them through his own lens. In 1984, he painted an image of Prince based on a photograph taken by Lynn Goldsmith. Today, Goldsmith and the Andy Warhol Foundation are fighting over whether this work violates Goldsmith’s copyright, in a case that has made it all the way to the United States Supreme Court.

For AI creation enthusiasts, this case is a microcosm of what’s taking place today with machine-assisted creation. They submit that Andy Warhol’s piece was an example of the fair use of a copyrighted work. That his work transformed the original photograph enough that it became a new piece. And further, that this is the same process being undertaken now by machines. But, while the Supreme Court will soon make a decision on the Goldsmith case, it’s not obvious that AI creation will be able to hide behind that same defense. Is Midjourney the modern equal of the paintbrush? Is the data harvesting of a machine equivalent to the interpretation of a human mind? That remains to be seen.

At any rate, the genie of AI-assisted creation is out of the bottle. For artists who make their work accessible on the internet, the question is: what rights do you have as a creator, and what rights do algorithms have to learn from your work? Does placing your portfolio online give anyone in the world the ability to access your style by referencing it in Stable Diffusion? Or does that reinterpretation need to be completed by a biological neuron in order to be legal? Where the courts land on these issues will define the future of artistic expression for decades to come. And may well determine who or what is doing the expressing.
